LIDAR stands for Light Detection and Ranging. It is a remote sensing and mapping technology designed to measure the dimensions and topography of terrain and 3D spaces.

While LIDAR has been in development since the 1960s, recent developments in laser sensing and data processing have unlocked the true potential of this remote sensing and mapping technology.

Today, LIDAR is used to sense, map and analyze spaces ranging from the upper parts of the Earth’s atmosphere to busy city streets and colossal rainforests.

What is LIDAR?

LIDAR is a remote sensing technology that determines the 3D characteristics of a target by measuring the time it takes for lasers to reflect into a receiver.
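
The timing principle above comes down to one line of arithmetic: the pulse travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below is illustrative only; the function name is hypothetical, and it ignores atmospheric effects that real systems correct for.

```python
# Speed of light in a vacuum, in meters per second.
SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_round_trip(round_trip_seconds: float) -> float:
    """Return the one-way distance to the target in meters.

    The pulse travels out and back, so the measured time is halved
    before it is converted into a distance.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


# A pulse that comes back after one microsecond hit something about 150 m away.
print(round(range_from_round_trip(1e-6), 1))  # 149.9
```

Because light covers roughly 30 cm per nanosecond, centimeter-level ranging needs timing electronics that resolve fractions of a nanosecond.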

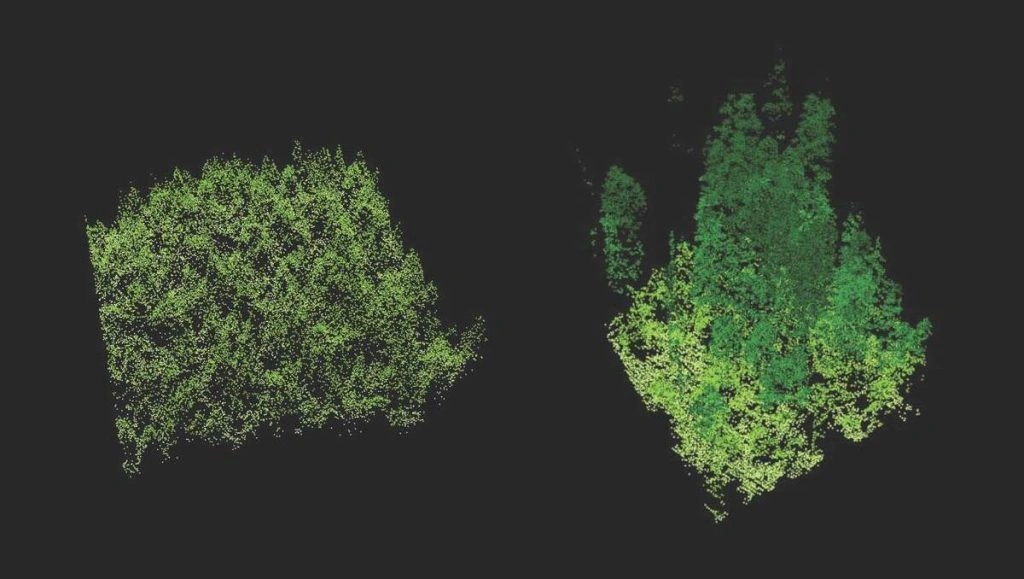

LIDAR sensing builds point clouds: collections of millions, billions, or even trillions of 3D points with X, Y, and Z coordinates.

Data points typically contain other attributes like intensity (the return strength of the laser beam), return number (the order of a reflection among those produced when a single pulse is intercepted on its way to the ground), GPS location, and timestamps. Raw LIDAR data is pre- and post-processed to extract features and create accurate 3D terrain maps, often with the assistance of machine learning.
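
To make those attributes concrete, here is a minimal sketch of how a single point might be represented in code. The class and field names are hypothetical, and `number_of_returns` is an added assumption (it is not mentioned above) that makes it possible to tell which reflection was the last one for a given pulse.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LidarPoint:
    """One point in a point cloud (illustrative field names)."""
    x: float
    y: float
    z: float
    intensity: int          # strength of the returned signal
    return_number: int      # 1 = first reflection of the pulse
    number_of_returns: int  # assumed extra field: reflections from this pulse
    gps_time: float         # timestamp of the pulse

    @property
    def is_last_return(self) -> bool:
        return self.return_number == self.number_of_returns


# Three reflections from one pulse, then a single reflection from the next.
points = [
    LidarPoint(512034.2, 4178820.7, 131.6, 87, 1, 3, 300123.001),
    LidarPoint(512034.3, 4178820.8, 124.9, 41, 2, 3, 300123.001),
    LidarPoint(512034.4, 4178820.9, 112.3, 65, 3, 3, 300123.001),
    LidarPoint(512040.0, 4178825.1, 112.1, 120, 1, 1, 300123.002),
]

# Last returns are the most likely candidates for bare ground.
last_returns = [p for p in points if p.is_last_return]
print(len(last_returns))  # 2
```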

Modern LIDAR systems can emit at least 160,000 pulses a second, ranging up to 500,000 in sophisticated systems. At the lower end of that scale, each 1 m² section still receives around 15 pulses, which is why large LIDAR datasets can easily contain millions of data points and can be accurate to within 10 cm. Additionally, LIDAR can target virtually any 3D object, including clouds, gases, aerosols or even single molecules.
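
The link between pulse rate and pulses per square meter depends on how quickly the sensor sweeps over the ground. As a rough sketch, assuming a single airborne pass with evenly spread pulses, density is the pulse rate divided by the area covered each second. The ground speed and swath width below are hypothetical values chosen only to show how a figure near 15 pulses per square meter can arise.

```python
import math


def point_density(pulse_rate_hz: float, ground_speed_m_s: float,
                  swath_width_m: float) -> float:
    """Average pulses per square meter for one non-overlapping pass."""
    area_per_second_m2 = ground_speed_m_s * swath_width_m
    return pulse_rate_hz / area_per_second_m2


def mean_point_spacing(density_per_m2: float) -> float:
    """Approximate distance between neighboring points, in meters."""
    return 1.0 / math.sqrt(density_per_m2)


# Hypothetical survey: 160,000 pulses/s, 60 m/s ground speed, 180 m swath.
density = point_density(160_000, 60.0, 180.0)
print(round(density, 1))                      # 14.8
print(round(mean_point_spacing(density), 2))  # 0.26
```

Flying slower, narrowing the swath, or overlapping passes all raise the density, which is why the same sensor can produce very different point clouds.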

LIDAR sensors are often twinned with hyperspectral cameras, combining LIDAR data clouds with other electromagnetic data to create stunningly detailed maps of complex topographical areas.

The result is a state-of-the-art remote sensing and imaging technology suited to both established and emerging applications.

What is LIDAR Used For?

LIDAR is a laser-based imaging or mapping technology that excels at mapping objects in 3D spaces.

LIDAR is flexible and scalable – you can use just one laser or many lasers working in tandem to scan objects at close or far range. Since lasers retain their integrity over long distances, LIDAR is efficient at mapping large areas in a short space of time.

In addition to large-scale applications, LIDAR is now employed in handheld devices. For example, smartphones and handheld imaging devices are equipped with LIDAR to measure the distances between different objects to create better images.

LIDAR Use Cases

LIDAR systems are employed across both large-scale commercial and small-scale consumer settings. While LIDAR systems were formerly large and expensive, the cost of technology is falling, and portability is increasing, enhancing the appeal of LIDAR as a remote sensing technology.

Here is a selection of key LIDAR use cases:

1: Environmental

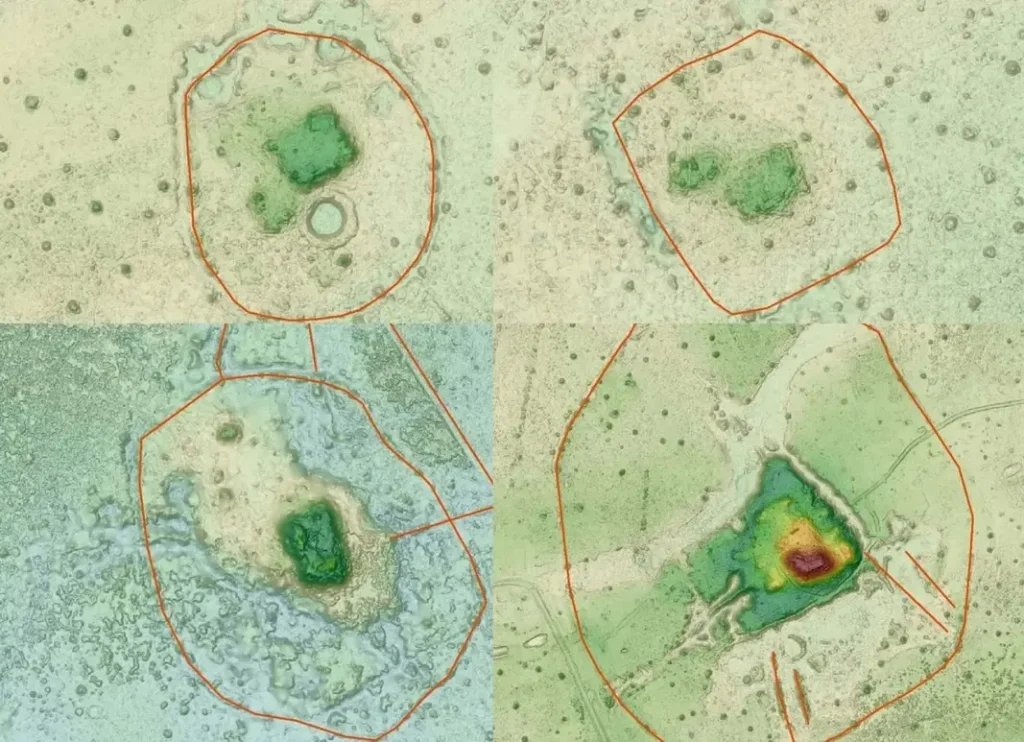

LIDAR excels as a terrain mapping technology, helping researchers build accurate 3D topographical maps.

One of the most valuable aspects of LIDAR is that it penetrates tree canopies and the water’s surface. Part of each pulse reflects off leaves, twigs, and trunks, while the rest passes through gaps in the canopy until it finally hits the ground.

LIDAR provides a data point called the “return number,” which records the order of each reflection that a single pulse produces on its way to the ground. Return data can help researchers estimate canopy height and other vegetation characteristics. When combined with image data, LIDAR can accurately discern healthy trees or foliage from unhealthy trees or foliage across different species.
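
As a rough illustration of how return data supports canopy height estimates, the sketch below groups reflections by pulse and subtracts the elevation of the last return from the elevation of the first. It assumes the last return reached the ground, which is not always true in dense vegetation, and the `pulse_id` grouping key is a hypothetical convenience rather than a standard attribute.

```python
from collections import defaultdict


def canopy_heights(returns):
    """Estimate canopy height per pulse.

    `returns` is an iterable of (pulse_id, return_number, z) tuples.
    The result maps each pulse_id to first-return z minus last-return z.
    """
    by_pulse = defaultdict(list)
    for pulse_id, return_number, z in returns:
        by_pulse[pulse_id].append((return_number, z))

    heights = {}
    for pulse_id, echoes in by_pulse.items():
        echoes.sort()              # order by return number
        first_z = echoes[0][1]     # top of canopy (or bare ground)
        last_z = echoes[-1][1]     # assumed to be the ground
        heights[pulse_id] = first_z - last_z
    return heights


sample = [
    (1, 1, 125.0), (1, 2, 118.5), (1, 3, 104.0),  # pulse through a tree
    (2, 1, 103.8),                                # pulse on open ground
]
print(canopy_heights(sample))  # {1: 21.0, 2: 0.0}
```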

Satellite imagery and RADAR aren’t capable of this, making LIDAR far superior for mapping complex 3D environments.

LIDAR has been used extensively in mapping vegetation, as it provides data on:

- Canopy height

- Canopy density and cover

- Leaf area index, which predicts the amount of leaf material in a canopy

- Vertical forest structure, predicting the number of stories in a tree canopy and their height or proportion

- Species identification, when LIDAR data is compared between different types of forests (e.g., many typical deciduous and coniferous forests will create different data points)

Land Use and Evolution

LIDAR is used to analyze plate tectonics, study landscape evolution, and measure the impact of events like landslides.

LIDAR is helping scientific researchers study our planet’s past, present, and future direction. Researchers have also used LIDAR to identify land uses and discover archaeological sites. For example, LIDAR was used to map the lost settlement of Llanos de Mojos in the Bolivian Amazon, abandoned some 600 years ago.

Bathymetric LIDAR, which penetrates the water’s surface, has been used to map marine habitats, glacial ice, and submarine atmospheric conditions.

Space

NASA has used LIDAR to map the topography of Mars and to analyze the Earth’s atmosphere, the latter as long ago as 1994, when a LIDAR device was mounted on Space Shuttle Discovery.

2: Urban

LIDAR is employed in numerous urban settings, helping municipal managers measure traffic, footfall, and other variables to plan city layouts and monitor public safety.

For example, LIDAR monitors highways in France and other countries, facilitating smart traffic lights that adapt to traffic conditions. Similarly, LIDAR analyzes streets in Busan, South Korea, to unlock insights into pedestrians, speeding vehicles, and traffic.

Indoor Mapping

Another recent development is using LIDAR indoors to create 3D indoor maps. For example, the National Institute of Standards and Technology in the USA is experimenting with LIDAR to create on-demand 3D maps of buildings to provide firefighters and other safety or law enforcement professionals with accurate interior maps. Similar applications help military and law enforcement map urban areas to plan operations.

Interior LIDAR sensors are used underground, enabling mining companies to map the topography of subterranean environments.

3: Technology

LIDAR helps autonomous vehicles react to the environment with the assistance of other computer vision technologies. While Tesla announced that they wouldn’t pursue LIDAR for their vehicles, favoring RADAR and cameras, many companies have successfully integrated LIDAR into AVs.

Tesla’s choice is an economic decision rather than a performance decision – LIDAR is considerably more expensive than RADAR. However, this is likely to change in the future – car-mountable sensors such as Velodyne’s Alpha Prime are equipping cars with advanced 3D spatial awareness beyond RADAR.

In drones and other AVs, LIDAR is used for many novel purposes. For example, the Technical Research Centre of Finland (VTT) uses LIDAR in conjunction with drones and computer vision to inspect deep-sea oil rigs, pipelines, and other infrastructure.

How Does LIDAR Work?

LIDAR devices follow this basic process:

- The LIDAR laser device emits signals

- Those signals, or pulses, reach obstacles

- Each pulse reflects off 3D obstacles and features, often producing several returns, before what remains of it reaches the ground. Not all pulses will reach the ground.

- Signals return to the receiver, which parses the data

- Pulses are assigned timestamps and location and are then represented in a LIDAR point cloud

- Data is post-processed, often using AI and machine learning to classify objects
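
The step that turns a measured range into a point in the cloud can be sketched with basic trigonometry. The example below is a deliberately simplified assumption: the sensor looks straight down, scans only side to side, and its position is already known, so aircraft roll, pitch, and heading are ignored. The function name and coordinate convention are hypothetical.

```python
import math


def georeference(sensor_xyz, scan_angle_deg, range_m):
    """Convert one range measurement into an (x, y, z) point.

    sensor_xyz     -- sensor position (x, y, z) in meters
    scan_angle_deg -- angle from straight down, positive toward +x
    range_m        -- measured distance to the reflecting surface
    """
    x0, y0, z0 = sensor_xyz
    theta = math.radians(scan_angle_deg)
    x = x0 + range_m * math.sin(theta)  # sideways offset from the flight line
    z = z0 - range_m * math.cos(theta)  # elevation of the reflecting surface
    return (x, y0, z)


# Sensor 1,000 m up, pulse fired straight down, surface found 900 m away.
print(georeference((0.0, 0.0, 1000.0), 0.0, 900.0))  # (0.0, 0.0, 100.0)
```

Production systems also fold in orientation data and calibration offsets, which this sketch leaves out.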

To achieve this, modern LIDAR systems consist of at least four key elements:

- The laser device: The laser emits pulses of light, which scatter across the environment. Some emitters feature just one laser, whereas others feature hundreds. Depending on the application, the light used in LIDAR is either UV, near-infrared or visible light.

- Scanner: This distributes the beams and regulates the rate and distance at which they scan the environment. Different scan directions or angles are necessary for different applications.

- Sensor: The sensor registers the reflections produced by each pulse. These are called returns. There may be a single return (e.g., when the laser bounces straight off the ground) or multiple returns (e.g., the laser rebounds off multiple objects, such as a building or tree).

- GPS: The GPS device tracks and logs the location of the LIDAR system, also helping validate the distances between objects with satellite data.

Data is gathered in a point cloud – a vast collection of data points that forms a 3D pattern. While some LIDAR systems incorporate machine learning processors in-line with the data collection process, LIDAR data is usually post-processed. Once the point cloud is plotted, it can be analyzed manually or automatically.
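
One simple form of automatic analysis is separating ground points from everything above them. The sketch below uses a basic rule rather than machine learning: it divides the area into grid cells, finds the lowest point in each cell, and treats any point close to that height as ground. The cell size and tolerance are hypothetical defaults, and the approach is known to struggle on steep slopes.

```python
import math


def classify_ground(points, cell_size=1.0, tolerance=0.2):
    """Return one boolean per (x, y, z) point: True if it looks like ground.

    A point counts as ground when it lies within `tolerance` meters
    of the lowest point found in the same grid cell.
    """
    def cell_of(x, y):
        return (math.floor(x / cell_size), math.floor(y / cell_size))

    # First pass: lowest elevation seen in each grid cell.
    lowest = {}
    for x, y, z in points:
        cell = cell_of(x, y)
        if cell not in lowest or z < lowest[cell]:
            lowest[cell] = z

    # Second pass: compare every point with its cell minimum.
    return [z - lowest[cell_of(x, y)] <= tolerance for x, y, z in points]


cloud = [
    (0.2, 0.3, 100.0),  # ground
    (0.7, 0.6, 100.1),  # ground, slightly higher
    (0.5, 0.5, 112.4),  # treetop in the same cell
    (1.4, 0.2, 101.0),  # only point in the neighboring cell
]
print(classify_ground(cloud))  # [True, True, False, True]
```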

Unsupervised and semi-supervised machine learning can work to extract and classify features from LIDAR datasets.

LIDAR data can also be labeled by hand to train precise supervised models or fed back into semi-supervised classification models to improve their performance.

The Main Types of LIDAR

LIDAR is split into two main categories:

- Airborne LIDAR

- Terrestrial LIDAR

1: Airborne LIDAR

Airborne LIDAR is mounted on planes, drones, or other flying vehicles. Aerial LIDAR can map vast areas within mere minutes, producing accurate maps of 3D terrain for processing and analysis. There are two types of airborne LIDAR:

- Bathymetric: Primarily uses green light to penetrate water bodies.

- Topographic: Uses various frequencies of visible, infrared, and UV light to measure topographic 3D features.

Airborne LIDAR is often combined with other imaging and remote sensing technologies, such as high-definition cameras and hyperspectral, multispectral, and thermal sensors.

2: Terrestrial LIDAR

Terrestrial LIDAR is installed in land vehicles, infrastructure, or handheld devices. These systems are usually designed to scan in all directions, creating 3D models to feed to other sensors or technologies.

There are many compact terrestrial LIDAR systems – you can even find LIDAR technologies in some cameras and smartphones. The iPhone 12 Pro’s LIDAR sensor enables 6x faster low-light autofocus and advanced AR capabilities.

Terrestrial systems are either:

- Mobile: For example, attached to cars or other vehicles or installed into a handheld device.

- Static: Fitted to a fixed point, e.g., the side of a building.

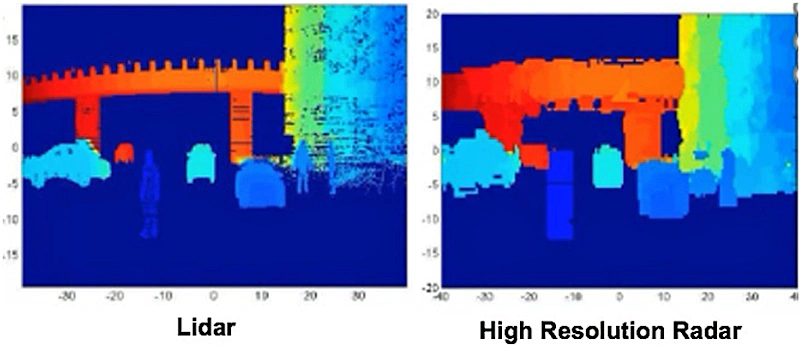

LIDAR vs RADAR

Two comparable remote sensing technologies are RADAR and LIDAR.

RADAR systems send and receive radio waves that locate objects in their range, thus providing a means to position 3D objects. On the other hand, LIDAR uses much shorter-wavelength light, typically between 500 and 1,600 nm.

Since RADAR uses radio waves, it has a significantly longer range than the visible and near-visible light used in LIDAR. However, that range comes at the cost of granularity and accuracy.

Broadly speaking, RADAR is excellent at detecting larger objects at distances ranging up to 500 km or further. On the other hand, long-range LIDAR systems operate accurately at a maximum range of around 250 m.

LIDAR creates a more accurate 3D image with thousands, millions, or even billions or trillions of data points, called a point cloud. RADAR cannot generate accurate 3D data in the same way as LIDAR.

Uses

- LIDAR: Short-range, ultra-detailed 3D mapping from either aerial or terrestrial positions and angles.

- RADAR: Less accurate 3D positioning, but at a much greater range than LIDAR.

Accuracy

- LIDAR: High accuracy, providing some 10 to 100 data points per square meter.

- RADAR: Lower accuracy, suitable for large object detection without accurate 3D mapping.

Range

- LIDAR: Low range of just 250m or so, due to the use of visible and near-infrared light.

- RADAR: Long wavelength radio waves yield effective ranges of over 500km in some systems.

Cost and Implementation

- LIDAR: More sophisticated and costly.

- RADAR: Cheaper technology, currently more viable for mass production.

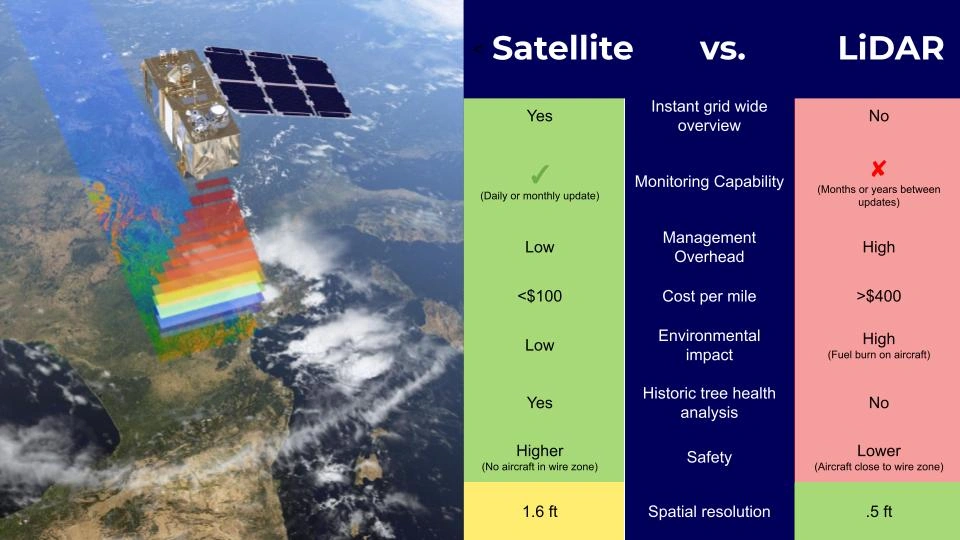

LIDAR vs Satellites

Another remote sensing technology comparable to LIDAR is satellite imagery.

Many people are familiar with satellite imagery through applications such as Google Earth, which combines stereo satellite imagery with images taken from aircraft and GIS data to create 3D maps.

Stereo satellite imagery combines two images of the same location from different angles, creating a 3D shape. Tri-image stereo satellite imagery is similar, combining three images for accurate 3D mapping. While this generally creates excellent results, stereo satellite imagery is most effective at mapping definitively shaped objects like buildings.

For example, if you look at Google Earth, you’ll find that urban areas with definitively shaped 3D objects are well-mapped, whereas other terrain features such as trees are poorly mapped. Stereo satellite imagery isn’t particularly effective at estimating vegetation or slope topography, which is where LIDAR excels. In addition, satellite imagery can’t penetrate semi-transparent surfaces or map 3D height without clear sight of the ground.

As we can see from the above, LIDAR penetrates tree canopies and doesn’t discriminate the shape of a 3D object, making it much more reliable for mapping 3D features, including the height of semi-opaque terrain features such as trees. Finally, LIDAR provides data points, whereas satellite imagery does not contain a point cloud or anything similar.

Broadly speaking, satellite imagery is much more efficient when mapping large areas with consistent monitoring since the satellite can make multiple passes per week, month, etc. On the other hand, LIDAR is smaller-scale but significantly more accurate.

Overall, you could argue that LIDAR is a data-oriented remote sensing technology, whereas satellite imagery is an image-oriented remote sensing technology.

Uses

- LIDAR: Mapping complex 3D objects with semi-opaque and semi-transparent features such as foliage.

- Satellites: Macro-level imaging of larger features; able to render larger or more geometrically defined 3D objects using tri-image stereo imaging.

Accuracy

- LIDAR: High accuracy, providing 10 to 100 data points per square meter.

- Satellites: Accurate, but doesn’t provide detailed data for smaller sections of land; better for wider spatial imaging rather than 3D mapping.

Range

- LIDAR: Low range of just 250m or so, due to the use of visible and near-infrared light. Ongoing monitoring is complex, except when LIDAR is mounted on lightweight drones.

- Satellites: Can photograph huge areas; images can be updated for consistent monitoring of large areas.

Cost and Implementation

- LIDAR: Costly, but far less so than satellites.

- Satellites: Expensive, but plenty of open-source satellite datasets are available.

LIDAR and Computer Vision

Computer vision (CV) is a branch of machine learning, equipping robots and AIs with visual capabilities. AI technologies such as CV pair well with remote sensing technologies like LIDAR.

For example, computer vision is often coupled with LIDAR or RADAR, enabling AVs to detect 3D objects at range, speeding up their reaction times and making them much safer.

LIDAR provides excellent detail of 3D objects at range, but complex objects cannot always be classified from LIDAR data alone. On the other hand, computer vision enables better object recognition but fails to provide high-resolution range information. Coupling the technologies solves both limitations – these “tightly coupled LIDAR and CV systems” share low-latency data between both LIDAR and computer vision systems, facilitating the real-time processing of LIDAR data.
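
One common way to picture this coupling is to project LIDAR points into the camera image and attach their ranges to whatever the vision model detected there. The sketch below assumes a simple pinhole camera, points already expressed in the camera's coordinate frame (z pointing forward), and a 2D bounding box supplied by some detector. All names and the calibration values are hypothetical.

```python
import math
import statistics


def project_to_pixel(point_cam, fx, fy, cx, cy):
    """Project a 3D point in the camera frame to (u, v) pixel coordinates.

    Returns None for points behind the camera.
    """
    x, y, z = point_cam
    if z <= 0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


def ranges_in_box(points_cam, box, fx, fy, cx, cy):
    """Collect the ranges of all points that project inside a 2D box.

    `box` is (u_min, v_min, u_max, v_max) in pixels.
    """
    u_min, v_min, u_max, v_max = box
    ranges = []
    for x, y, z in points_cam:
        pixel = project_to_pixel((x, y, z), fx, fy, cx, cy)
        if pixel is None:
            continue
        u, v = pixel
        if u_min <= u <= u_max and v_min <= v <= v_max:
            ranges.append(math.sqrt(x * x + y * y + z * z))
    return ranges


# Hypothetical calibration and a detection box around a pedestrian.
fx = fy = 1000.0
cx, cy = 640.0, 360.0
lidar_points = [
    (0.5, 0.0, 20.0),   # projects inside the box
    (-0.4, 0.2, 20.5),  # projects inside the box
    (5.0, 0.0, 20.0),   # projects well to the right of the box
    (0.0, 0.0, -5.0),   # behind the camera
]
hits = ranges_in_box(lidar_points, (600, 340, 700, 400), fx, fy, cx, cy)
print(len(hits), round(statistics.median(hits), 1))
```

The camera contributes the label ("pedestrian") and the LIDAR contributes the distance, which is the division of labor described above.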

Outside the consumer AV space, LIDAR is often coupled with computer vision to create high-definition 3D maps that combine HD videos and images with detailed LIDAR data points. As such, LIDAR and CV are naturally synergistic in the geographic information system (GIS) industry.

LIDAR, AI, and Machine Learning

Computer Vision (CV), deep learning, and artificial intelligence (AI) are used to solve the challenge of interpreting and analyzing image data, including LIDAR point clouds. LIDAR is exceptionally detailed, but since it provides thousands or millions of data points, processing is time-consuming and laborious.

Random forests, support vector machines, and gradient-boosted trees are three supervised learning techniques that classify LIDAR features at scale. These techniques are suitable when a data labeling team can accurately label a small portion of LIDAR data, thus providing a target for the algorithms to act upon for the rest of the data.
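
The workflow of labeling a small sample and letting a model handle the rest can be shown with a much simpler classifier than the ones named above. The sketch below swaps in a nearest-centroid classifier purely to keep the example dependency-free. It is not a random forest, SVM, or gradient-boosted model, and the two features (height above ground and normalized intensity) are hypothetical choices.

```python
import math
from collections import defaultdict


def fit_centroids(features, labels):
    """Average the feature vectors of each class in the labeled sample."""
    sums = {}
    counts = defaultdict(int)
    for feat, label in zip(features, labels):
        if label not in sums:
            sums[label] = [0.0] * len(feat)
        for i, value in enumerate(feat):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: tuple(total / counts[label] for total in vector)
        for label, vector in sums.items()
    }


def predict(centroids, feat):
    """Assign the class whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: math.dist(centroids[label], feat))


# Small hand-labeled sample: (height above ground in m, normalized intensity).
labeled_features = [(0.1, 0.30), (0.2, 0.35), (12.0, 0.60), (15.0, 0.55)]
labeled_classes = ["ground", "ground", "vegetation", "vegetation"]
centroids = fit_centroids(labeled_features, labeled_classes)

# The unlabeled remainder of the dataset is classified automatically.
unlabeled = [(0.3, 0.40), (9.5, 0.50)]
print([predict(centroids, f) for f in unlabeled])  # ['ground', 'vegetation']
```

In practice, a library implementation of one of the techniques named above would replace `fit_centroids` and `predict`, but the overall pattern of training on the labeled subset and predicting on the rest stays the same.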

Automated or semi-automated LIDAR classification helps build complex high-definition 3D maps from LIDAR and other data. For example, the geographic information system (GIS) provider ESRI used machine learning to replace 50,000 labor hours by automating the detection of utility poles and power lines.

Summary: Complete Guide to LIDAR

LIDAR is an advanced remote sensing technology that intersects with a whole host of industries, sectors and other technologies. As of 2022, LIDAR is becoming more portable, making it viable for mass-produced consumer devices such as smartphones.

While LIDAR is comparable to RADAR and satellite imagery, it’s not the same. LIDAR is capable of producing detailed 3D maps of complex objects, such as trees and other landscape features. Modern LIDAR can even sense aerosols and high concentrations of gases.

Labeling LIDAR is time-consuming but can be accelerated using supervised and semi-supervised automated labeling techniques. LIDAR labeling requires considerable labeling expertise and domain specialism, combined with cutting-edge tools – Aya Data’s LIDAR labeling specialists have proven experience and access to state-of-the-art labeling tools and workflows.

Frequently Asked Questions

What are RADAR and LiDAR technologies for railway applications?

RADAR and LiDAR technologies for railway applications are advanced sensing systems used to enhance safety, automation and operational efficiency in rail transport.

What LiDAR & Radar annotation services does Aya Data offer?

Aya Data provides high-precision annotation for LiDAR point clouds and radar signals, including 3D bounding boxes, semantic segmentation and object tracking tailored for autonomous vehicles, railway safety, autonomous trains and infrastructure inspections.

Can Aya Data handle large-scale LiDAR/Radar datasets for railways?

Yes. Our scalable pipelines process terabytes of sensor data from trains, tracks and vehicles, with tools optimized for point cloud compression and real-time annotation.

How does Aya Data ensure accuracy in sensor data labeling?

We combine AI pre-labeling with human-in-the-loop validation, strict QA protocols, and domain expertise in transportation to deliver >99% accuracy, compliant with ISO autonomous vehicle and railway standards.

Why choose Aya Data over other annotation providers?

A. Railway-specific expertise (e.g., understanding ETCS signaling data)

B. Security-compliant workflows (GDPR, ISO & AICPA SOC)

C. Fast turnaround with SLA-bound delivery